Here’s a thought experiment: let’s say you need to buy a car.

Now, let’s say you go to the car dealership, and your salesperson shows you several large cardboard boxes. Aside from the guarantee that each object within each box meets the most basic definition of a car — a person-sized frame surrounding an engine and four wheels — no additional information is given about the status, characteristics or condition of the motorized vehicle inside. Outside of the box are listed prices of differing amounts. You must decide on your purchase before you open the box.

Which box do you pick?

The Car-Buying Thought Experiment

Most people would reject this premise. The immediate problem is obvious: buying a car, sight unseen, based on price tag alone would be a foolish decision for any consumer. One might even argue that price, while important, is neither particularly descriptive nor (for some) the most relevant information a buyer needs in judging the quality of a car or its fit to the buyer’s needs. Many cars are available within a consumer’s ideal price range, so price tag alone just isn’t enough to whittle down the possible options to a single choice.

“Fair enough,” says your salesperson. They proceed to add some more information about each car to the front of the box. Now listed are the manufacture year of the car, as well as the mileage: all concrete, objective, rankable numbers that describe the vehicle within.

Now which box do you pick?

Most consumers would still say “none”. Most consumers would take offense to buying a car under such absurd restrictions.

Yet, this is precisely what opponents of holistic review (and affirmative action’s role in this process) would have colleges do when it comes to college admissions.

Car buyers might consider things like price and mileage when they shop for a car, but most express these considerations as part of a larger series of questions related to “fit”: Does this car fit my immediate needs? Does this car fit my predicted long-term goals? The chosen car is not necessarily — or even usually — the “best car”; it is instead summed up as the “best car for me”.

The chosen car for any individual buyer may not necessarily excel in any one aspect; instead it is likely to be the one with the best combination of characteristics that meet the unique interests of the buyer. Some might, for example, be concerned about how easy it will be to move a car seat in and out of the middle back seat. Others might be curious to know how the third row folds back to allow for carting of large items. Then, there’s the appearance of the dash, the number and placement of the cupholders, the exact tinting of the glass. Although these latter considerations might seem frivolous, they are still of utmost importance to the consumer: for a car buyer, a car represents an investment and a commitment.

The point here is that everyone’s criteria for judging a car differ, and every car is judged by those slightly different standards. Thus, while one might be able to use an algorithm to generate a rankable car score based on easily compared numbers like price, mileage and year of manufacture, that calculation holds little practical value when it comes to how people actually buy a car. Most people collect as much information as possible about each possible choice, and compare each option against a mental checklist of important factors.

This is a very informal, mostly subconscious type of holistic review.

This is also, in very broad strokes (and with some extremely important caveats detailed below), how holistic review works in college admissions.

The Disconnect on Holistic Review

Earlier this month, I was chatting with Dr. Oiyan Poon (@spamfriedrice) on the recent anti-affirmative action lawsuits filed by conservative lobbyist Edward Blum and his organization, “Students for Fair Admissions”. We were trying to understand the rationale of the lawsuits, and specifically the disconnect between affirmative action supporters (who view holistic review as fair) and opponents (who view the exact same process as the height of injustice). Here is an excerpt from that conversation:

Oiyan: Blum and (fellow anti-affirmative action thinker, Richard) Kahlenberg’s lawsuits are about the “how”. They argue that holistic review is too willy-nilly, and that it allows for too much bias. But, as a former reviewer, I can say that there are safeguards against bias, that allows for fair application of evaluative criteria. It’s about designing qualitative methods.

Jenn: I liken it to the grant review process.

Oiyan: EXACTLY.

Jenn: Which is a meaningful parallel only to people who deal with grants, but still — grant review is about as non-subjective as a subjective process can be. There will be problems, but this process has ultimately proven to be good at judging disparate, complex things that can’t be outright boiled down to a single set of rankable numbers.

Oiyan: Exactly. Yet, it’s hard to explain this process to the majority of people. Can you think of any other examples of this outside of the academy?

Jenn: Not really. I think part of the problem is that there are very few non-ivory tower analogs to this process, but it’s one that is intimately familiar to academics via the grants review mechanism. Those of us in academia have long-ago adjusted to it. We understand it, and see its strengths. Sure we bitch about grant reviewers, but we know that fundamentally the process is fair. Folks outside of academia aren’t privileged with that experience, and therefore have a harder time innately relating. We need to find the outside-of-academia parallel.

Oiyan: I kinda wish we could have this conversation published on your blog.

Jenn: Why can’t we?

The insights in this exchange are valuable: they begin to identify how a holistic review process that might be familiar to some people might be foreign to others, and that this basic misunderstanding of the process might underlie much of the disconnect. Hence, the car-buying thought experiment.

The strength of this analogy is that it makes intuitive why holistic review has value: cars are very different in shape, size and quality. Even within the same make and model, no two cars are exactly the same. The process of buying a car requires us to take often hard-to-quantify information about things that are very different from each other and to find some way to compare them against some sort of common scale of “fit”.

But, there are, of course, many ways in which this analogy also falls apart.

The Problems with the Car-Buying Thought Experiment

Of course, the major problem with this analogy is that college applicants are not cars. We are not manufactured in a factory. We cannot be customized to best suit a university’s needs, as a particularly wealthy car buyer might do. We cannot be returned if buyer’s remorse kicks in.

Cars also don’t have rights, thoughts or feelings; they don’t have preferred buyers, and they have no control over their own appeal. They don’t get hurt when they are rejected. A buyer can compare two similar cars side-by-side. They can bargain a car down to make it more appealing, or choose not to buy a car until next year. None of this is true for college admissions.

Cars are not people. I acknowledge this. That doesn’t mean we can’t learn something about holistic review by thinking about how we buy cars. More importantly, we can use this familiar process to now explore how holistic review can be formalized, made more objective, and made more efficient.

How Grant Review Works

Unlike Oiyan, I have never served as an admissions officer (although I have learned how the process works from admissions officers through my time at Cornell). However, I am very familiar with grant review (having been on all sides of this process). Because the two review mechanisms are similar (and the admissions’ holistic review is described by Oiyan here), I will offer a brief overview of how the grant review process has been developed to judge widely disparate grant applications — all of which will differ in focus, approach, and significance — objectively and fairly using a qualitative holistic review approach.

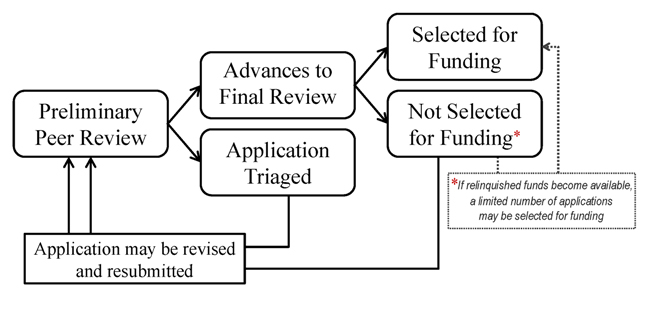

A funding agency starts by randomly dividing up the applications and assigning each to two (or three) reviewers. Reviewers will be given a detailed rubric of considerations by which to judge all aspects of the application — scope, approach, significance, applicant background, etc. This rubric is used to guide the reading and ensure that the application is being considered broadly. Often, the reviewer will be asked to summarize how each application meets each factor in the rubric in short answer format to make sure the factor has been addressed in the reading; there are often hundreds of such factors in the college admissions process. Upon completing the reading, the reviewer assigns a single “priority score” on a scale (e.g. 1 to 5) which reflects the degree to which the reviewer thinks this application meets or excels at all of the reviewed factors. Each application is read and scored blindly relative to any other in the stack.

The priority scores are averaged for every application between its two or three reviewers, and then all average priority scores are ranked. Applications that rank in the bottom percentiles by this initial priority score are rejected (or “triaged”); the cut-off reflects some threshold above which all applications are considered worthy of funding if money weren’t an issue.

The untriaged applications are then re-reviewed in a special meeting of all reviewers. For each application, the two initial reviewers will summarize the application to the entire group and offer thoughts on strengths and weaknesses, and then each member of the group will secretly assign their own priority score to the application. Again, this is done blindly relative to all the other applications. The average of these new priority scores is then used to re-rank this set of applications; some top percentile of applicants is then offered funding based on availability of money.
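For readers who think in code, the two rounds described above can be sketched in a few lines of Python. This is only an illustration of the averaging, ranking and triage steps, not any agency’s actual procedure: the function names, the application labels, the scores, and the two cut-off counts are all made up, and I am assuming that a higher priority score is better (some agencies score the other way around).

```python
from statistics import mean


def rank_by_average(scores_by_app):
    """Average each application's scores and sort from best to worst.

    Assumption for this sketch: a higher priority score is better.
    """
    averages = {app: mean(scores) for app, scores in scores_by_app.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


def two_stage_review(initial_scores, panel_scores, keep_count, fund_count):
    """Hypothetical two-round review: triage, then re-score and re-rank.

    keep_count and fund_count stand in for the percentile cut-offs; a real
    agency would set them from its own thresholds and budget.
    """
    # Round 1: average the two or three initial reviewers, rank, and triage.
    survivors = [app for app, _ in rank_by_average(initial_scores)[:keep_count]]

    # Round 2: only survivors are re-scored by the full panel and re-ranked.
    reranked = rank_by_average({app: panel_scores[app] for app in survivors})
    funded = [app for app, _ in reranked[:fund_count]]
    return survivors, funded


# Made-up example data: five applications, scored on a 1-to-5 scale.
initial_scores = {
    "A": [4, 5],
    "B": [2, 3],
    "C": [5, 4, 4],
    "D": [1, 2],
    "E": [3, 4],
}
# Full-panel scores exist only for applications that survive triage.
panel_scores = {
    "A": [4, 4, 5, 3],
    "C": [5, 5, 4, 5],
    "E": [3, 4, 3, 3],
}

survivors, funded = two_stage_review(
    initial_scores, panel_scores, keep_count=3, fund_count=2
)
print("Survived triage:", survivors)
print("Offered funding:", funded)
```

Notice that in this toy example application A ranks first after the initial read but second after the full panel weighs in, and application E clears triage without being funded. No application is ever scored against another one directly; the ranking only happens after every score is in.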

The value of this system is that it permits objective comparison of applications that cannot be readily judged by quantifiable metrics. It further underscores a basic truth: applications that survive triage but are not funded are not “less qualified” than the funded applications.

Parallels to College Admissions and the Affirmative Action Debate

Armed with the understanding of how a holistic review process works, we can now consider how this process is often misinterpreted with regard to college admissions by opponents of affirmative action and holistic review.

In holistic review, race and ethnicity cannot be used determinatively. Applicants are never considered side-by-side. No one is “passed over”, or is ever “giving up their slot”, in favour of a minority applicant. Minority applicants aren’t unqualified or underqualified; all that are subjected to secondary review are above the cutoff threshold for basic qualification. Admittance decisions aren’t determined until after all applicants are scored and ranked, so there’s no point in a reasonable holistic review process when a cap quota might be applied.

Opponents also assert that racial information is not a measure of applicant merit. What these opponents forget is that colleges are tasked not with rewarding applicants (just as consumers are not tasked with rewarding car manufacturers) but with identifying the “best fit” applications. The Supreme Court has long upheld the “compelling interest” that colleges and universities have in addressing campus diversity; consequently, information that speaks to student diversity, including but not limited to race and ethnicity, is relevant to assessing “fit”. To argue for the removal of this information from the admissions process is to fundamentally argue that campus diversity is not a compelling interest for colleges and universities.

Ultimately, many Asian American opponents of affirmative action (who are incidentally a minority of all Asian Americans) chafe at the idea that Asian American applicants who score highly on standardized tests may not receive admission to their top choice schools. That is, indeed, the situation of the plaintiff in Blum’s lawsuit against Harvard alleging anti-Asian bias in the process. What these opponents forget is that standardized test scores are only one component of an applicant’s application package, and aren’t even the most important factor considered in holistic review.

What these opponents forget is that an example of a high-scoring applicant who receives a low priority score is not de facto evidence of bias; it may also be nothing more than evidence that holistic review exists and it is successfully considering applicants holistically and not by quantifiable factors alone.

Resenting or disparaging applicants who are admitted, on the basis of the qualifications of those who are not, fundamentally (and immaturely) misunderstands the holistic review process.

Moreover, Edward Blum’s anti-affirmative action lawsuits are equivalent to Honda suing you because you decided to buy a Toyota Prius.

Read More: Oiyan discusses the specifics of college admissions and how it works.

Act Now! Sign this petition to oppose Edward Blum’s anti-affirmative action lawsuits.